Privacy Please is an ongoing series exploring the ways privacy is violated in the modern world, and what can be done about it.

Amazon's Alexa can feel like a form of magic. By merely speaking it into the universe, users can conjure up-to-the-minute weather reports from far-off lands, summon physical goods to be same-day rushed to their doors, and even get medical advice. But as with most magic tricks, when it comes to Alexa, it's worth paying attention to just who, exactly, is behind the curtain.

Because, despite what many people may assume, with Alexa-enabled devices like the Echo, there is very much someone behind the curtain. Or, to be more precise, many someones. As with most forms of modern "smart AI," Alexa depends on real humans listening in on a share of conversations and transcribing those requests.

Amazon calls this "supervised machine learning," and rather blandly describes strangers being paid to creep on its customers as "an industry-standard practice where humans review an extremely small sample of requests to help Alexa understand the correct interpretation of a request and provide the appropriate response in the future."

Put another way, your personal questions, doubts, and fears spoken aloud as if no one was listening may have found themselves in the hands of a group of people paid to do exactly that.

What truth do you let out when you believe no one is watching?

Thankfully, there's something you can do about it that doesn't involve taking a hammer to your smart assistant (though, if you do go that route, please recycle the smashed Echo afterward).

Always listening. Credit: Joby Sessions / getty

Unless you take the time to dig through your settings and actively opt out, your Alexa-enabled device records and stores your questions and conversations whenever it hears a so-called wake word like "Alexa."

In some instances, real humans listen to and transcribe those recordings with the goal of improving Amazon's voice-recognition software.

Or at least that's how it's supposed to work. Alexa has been known to record people and rooms even when there's no wake word spoken intentionally — or spoken at all. It happens so often, in fact, that Amazon has its own term for the privacy-invading habit: "false wakes."

"In some cases, your Alexa-enabled device might interpret another word or sound as the wake word (for instance, the name 'Alex' or someone saying 'Alexa' on the radio or television)," explains the company.

In these disturbing situations, complete strangers can end up with audio recordings of your Alexa chats. Those chats might be innocuous things like asking for the weather forecast, yes, but also potentially private information like asking for directions to the nearest Alcoholics Anonymous.

That's because Amazon pays people to listen to and transcribe a subset of Alexa requests with the stated goal of improving the service.

In 2019, Bloomberg reported on a group of contractors who had this very job. One of those reviewers told the publication that, in addition to their other work, the contractors each transcribed around 100 recordings a day that appeared to be the result of false wakes. Those false wake recordings included what they thought to be a recording of sexual assault as well as banking details.

To make matters worse, Bloomberg later reported that some Amazon employees listening to and transcribing Alexa recordings could see where those customers lived. Once you have someone's location data, it's pretty easy to figure out their real name.

This is all in addition to the fact that your recordings are kept on Amazon's servers for later reference. You can ask Amazon to delete those records, but even if you do, the company keeps a copy of the written transcript for 30 days.

In other words, Amazon Echo devices pose a potential privacy threat. Thankfully, there's something you can do about it.

Turn off the lights on invasive tech. Credit: Chloe Collyer / getty

Amazon's Echo and other Alexa-enabled devices hoover up your personal information by default. That means that unless you dig around in those devices' settings and make an affirmative choice to say "no, thank you," in the eyes of Amazon you've effectively said "yes, please."

Of course, that's not true. As Apple's recent update to iOS demonstrated, when presented with the choice, very few people will opt in to surveillance. And while that choice often isn't clearly presented to people, that doesn't mean you don't have it.

To delete the voice recordings Amazon has already stored:

Log in to your Amazon account.

Go to the Alexa privacy settings page.

Select the "Privacy Settings" tab in the top center of the page.

Under "View, hear, and delete your voice recordings," select "Review voice recordings."

Where it says "Today," hit the drop-down menu and select "All History."

Select "Delete all of my recordings."

To stop Amazon from saving your recordings going forward:

Log in to your Amazon account.

Go to the Alexa privacy settings page.

Select the "Privacy Settings" tab in the top center of the page.

Under "Review and manage smart home devices history," select "Manage Your Alexa Data."

Under "Choose how long to save recordings," select "Don't save recordings," then hit "Continue."

To opt out of having humans review your voice recordings:

Log in to your Amazon account.

Go to the Alexa privacy settings page.

Select the "Privacy Settings" tab in the top center of the page.

Under "Manage how you help improve Alexa," select "Manage how you help improve Alexa."

Under "Help improve Alexa," deselect "Use of voice recordings."

When speaking with Alexa, it's important to remember that the tool is more than just a disembodied voice in the cloud, swooping in to magically answer your questions.


The digital assistant that's become synonymous with Amazon Echo devices is billed by the data-hungry conglomerate as an educator, surrogate caregiver, and all-around helping hand. And the 100 million-plus Alexa-capable devices sold by Amazon are a testament to the fact that, for a rather large section of the global populace, that message resonates.

Now is your chance to send a different message straight to Amazon itself, and in the process, let the silence of your newly deleted Amazon records echo in its executives' ears.

Topics Alexa Amazon Amazon Echo Cybersecurity Privacy

One Night Only! The Implosion of the Riviera, Monaco Tower

One Night Only! The Implosion of the Riviera, Monaco Tower

Monkeypox vaccine: Who can get one and how does it work?

Monkeypox vaccine: Who can get one and how does it work?

On the Enduring Appeal of Frederick Ashton’s La Fille Mal Gardée

On the Enduring Appeal of Frederick Ashton’s La Fille Mal Gardée

Best Apple TV+ deal: Get 3 months for $2.99 monthly

Best Apple TV+ deal: Get 3 months for $2.99 monthly

Dentist Poem

Dentist Poem

Best Amazon Fire deal: Get the Amazon Fire HD 10 for $30 off

Best Amazon Fire deal: Get the Amazon Fire HD 10 for $30 off

Ticketmaster's Platinum tickets are hurting fans

Ticketmaster's Platinum tickets are hurting fans

Best robot vacuum deal: Get the Roborock Q5 Max for 53% off at Amazon

Best robot vacuum deal: Get the Roborock Q5 Max for 53% off at Amazon

TikTok recipe for air fryer chicken skewers is surprisingly delicious and simple

TikTok recipe for air fryer chicken skewers is surprisingly delicious and simple

Airship: Photos from Guyana

Airship: Photos from Guyana

In Moscow, Constructivist Marvels Face Demolition

In Moscow, Constructivist Marvels Face Demolition

Best WiFi extender deal: The TP

Best WiFi extender deal: The TP

Last Exit: Luc Sante Moves Out

Last Exit: Luc Sante Moves Out

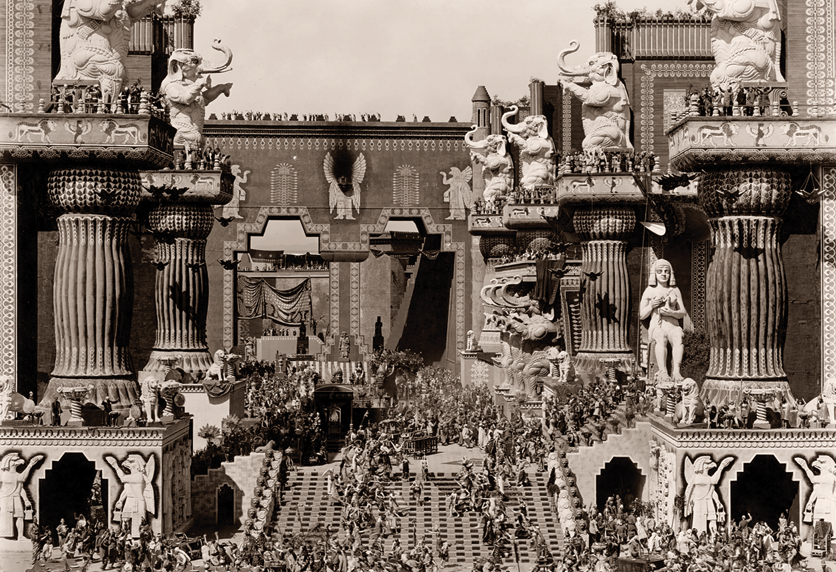

D. W. Griffith’s “Intolerance” Changed Life Outside the Movies

D. W. Griffith’s “Intolerance” Changed Life Outside the Movies

Michael Herr, 1940

Michael Herr, 1940

NYT mini crossword answers for May 12, 2025

NYT mini crossword answers for May 12, 2025

Get a eufy Security video doorbell for under $70

Get a eufy Security video doorbell for under $70

David Hockney’s Improbable InspirationsThe Book JeanA Siren in a Paper Sleeve by Christopher KingRedux: The Taxman ComethA Gentler Reality TelevisionStaff Picks: Kendrick, Cardi Covers, and Cautionary TalesCooking With Pather PanchaliWriters’ Fridges: Leslie JamisonBoy Genus: An Interview with Michael KuppermanNietzsche Wishes You an Ambivalent Mother’s Day by John Kaag and Skye C. ClearyPhilip Roth, 1933–2018Ten Superstitions of Writers and ArtistsThe Book I Kept for the CoverPhilip Roth, 1933–2018Staff Picks: Sharp Women and Humble TurtlesGertrude Stein's Mutual Portraiture SocietyThe Unfortunate Fate of Childhood DollsOn Beyoncé, Beychella, and Hairography by Lauren Michele JacksonThe Life and Times of the Literary Agent Georges BorchardtLilac, the Color of Fashionable Feelings Game of Thrones trailer: 10 clues you missed in the new Season 7 promo Balenciaga's $1,100 fake paper bag is already sold out 'Rogue One' is coming to Netflix this summer Smashburger adds Apple Pay payments and loyalty program How to get a job at: Getty Images Chicago's new Apple store has a MacBook Air for a roof Intel is bringing 5G, drones, and VR to the Olympics in 2018 Lucky couple received some great NSFW marriage advice on their anniversary J.K. Rowling revealed there are two Harry Potters These definitely real leaked emails show exactly why the Han Solo spin After 8 years, Owl City finally explains that weird lyric in 'Fireflies' The future of drone delivery depends on predicting the weather Cartoon Network's 'OK K.O.!' 
is a unique TV/video game collaboration What's coming to Netflix in July 2017 The USGS sent SoCal a quake alert on Wednesday for a tremor that actually occurred in 1925 The NBA Draft proves just how far men's fashion has come Finally, there's a parental control app that won’t cause a family feud FX should really accept our brilliant idea for 'Fargo' Season 4 Microsoft's new Windows Whiteboard app has reportedly leaked Sick of censoring content, China bans livestreaming altogether

1.921s , 10158.296875 kb

Copyright © 2025 Powered by 【Watch Porn Story Episode 10 full video】,Fresh Information Network